This is in continuation to yesterday’s post on T-SQL worst practices that affect the performance of the results returned/processed.

What have I done? T-SQL Worst Practices

I am attempting to summarize all of the worst T-SQL practices that Jacob demonstrated at Tech-Ed 2011 (India). The summarization has been done after classifying these practices into 3 major buckets:

- Practices that can affect accuracy of data returned/processed (Part 01 – read here)

- Practices that can adversely impact performance (Part 02 – read here)

- Practices that can have unpredictable execution side-effects (Part 03 – today’s post)

While the points covered are exactly the same as covered during the Tech-Ed, I have taken hints from Jacob’s queries and have come up with my own versions using the AdventureWorks2008R2 database supplied as a sample database for SQL 11 (“Denali”) CTP01. In cases where I am directly using Jacob’s scripts, I have mentioned it as such.

Part 03 – Practices that contribute to execution errors

The previous two sessions focused on the efficiency and accuracy of the application. However, errors (logical or otherwise) during execution are a nightmare for technical support and any team assigned the task of maintaining the product after it’s release. Even worse is when these execution errors in the code are sporadic. Today, in the final part of this series, we will look at some of the coding practices which might result in an unstable product.

Using Ordinal positions in the ORDER BY clause

Manager: I need a list of the first and last initials of all contacts in our database. Can you do that?

You: Sure.That’s quite easy. Here you go:

USE [AdventureWorks2008R2]

GO

SELECT LEFT(p.FirstName, 1) AS FirstInitial,

LEFT(p.LastName, 1) AS LastInitial

FROM Person.Person p

GO

Manager: But, I want it sorted by the First Initial.

You: No issues. It’s just a small change.

USE [AdventureWorks2008R2]

GO

SELECT LEFT(p.FirstName, 1) AS FirstInitial,

LEFT(p.LastName, 1) AS LastInitial

FROM Person.Person p

ORDER BY 1

GO

Manager: That was fast! But, there might be situations where I need to sort it by the Last Name.

You: Okay, it looks like we will need to go beyond simple ad-hoc queries. I will create a stored procedure for you.

USE [AdventureWorks2008R2]

GO

CREATE PROCEDURE GetContacts

(

@Sort VARCHAR(20)

)

AS

BEGIN

SELECT LEFT(p.FirstName, 1) AS FirstInitial,

LEFT(p.LastName, 1) AS LastInitial

FROM Person.Person p

ORDER BY CASE @Sort WHEN 'first' THEN 1

ELSE 2

END

END

GO

Manager (in the morning the very next day): The stored procedure you gave me yesterday does not work. Fix it and see that you always test your code before submitting to me.

You: How is this possible? Today is going to be a bad day. I missed the bus, and now my manager is angry at me. I must do something quickly……Let me try this:

SELECT LEFT(p.FirstName, 1) AS FirstInitial,

LEFT(p.LastName, 1) AS LastInitial

FROM Person.Person p

ORDER BY FirstInitial

GO

You: Okay. This works, so let me put it inside the stored procedure to fix the issue that my manager is encountering.

ALTER PROCEDURE GetContacts

(

@Sort VARCHAR(20)

)

AS

BEGIN

SELECT LEFT(p.FirstName, 1) AS FirstInitial,

LEFT(p.LastName, 1) AS LastInitial

FROM Person.Person p

ORDER BY CASE @Sort WHEN 'first' THEN FirstInitial

ELSE LastInitial

END

END

GO

When you execute the ALTER statement to begin your unit test:

Msg 207, Level 16, State 1, Procedure GetContacts, Line 10

Invalid column name ‘FirstInitial’.

Msg 207, Level 16, State 1, Procedure GetContacts, Line 11

Invalid column name ‘LastInitial’.

You (dumb-struck): ?? What is going on? Nothing is working as expected…

Actually, it’s not your fault entirely. It’s the way expressions are evaluated when used in the ORDER BY clause. When used in the ORDER BY clause, expressions need to be computed before-hand. In your case, you are using a column alias, which does not exist yet and hence the error. Here’s what you can do to resolve the ugly situation:

ALTER PROCEDURE GetContacts

(

@Sort VARCHAR(20)

)

AS

BEGIN

SELECT LEFT(p.FirstName, 1) AS FirstInitial,

LEFT(p.LastName, 1) AS LastInitial

FROM Person.Person p

ORDER BY CASE @Sort WHEN 'first' THEN LEFT(p.FirstName, 1)

ELSE LEFT(p.LastName, 1)

END

END

GO

Here’s the result – it works!

Maintaining Integrity during transactions

At the end of the day, anything and everything within a database is all about maintaining data integrity. Microsoft SQL Server ensures this and also provides all the basic components to the development community so that they can ensure that errors during code execution are handled properly and roll-backs issued so that anything that is inconsistent is not committed to the database.

However, we as developers tend to ignore a couple of things, or take things for granted – one of them is the fact that Microsoft SQL Server leaves the user to decide whether or not it should automatically abort a transaction in the case of an error.

Let’s set the stage by assuming that we have a table called Customers and have some basic data in it.

/*-----------------------------------------------------------------------------

Date : 23 March 2011

SQL Version : SQL Server 2005/2008/Denali

Author : Jacob Sebastian

Email : jacob@beyondrelational.com

Twitter : @jacobsebastian

Blog : http://beyondrelational.com/blogs/jacob

Website : http://beyondrelational.com

Summary:

This script is part of the demo presented by Jacob Sebastian during

Tech-ED India 2011 on "TSQL Worst Practices".

Disclaimer:

THIS CODE AND INFORMATION ARE PROVIDED "AS IS" WITHOUT WARRANTY OF

ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING BUT NOT LIMITED

TO THE IMPLIED WARRANTIES OF MERCHANTABILITY AND/OR FITNESS FOR A

PARTICULAR PURPOSE.

-----------------------------------------------------------------------------*/

USE TechEd;

GO

IF OBJECT_ID('UpdateCustomer') IS NOT NULL DROP PROCEDURE UpdateCustomer;

GO

IF OBJECT_ID('Customers','U') IS NOT NULL BEGIN

DROP TABLE Customers

END

GO

CREATE TABLE Customers (

CustID INT IDENTITY,

CustName VARCHAR(100),

CustCity VARCHAR(100),

LastOrder DATETIME

)

GO

INSERT INTO Customers (CustName, CustCity)

SELECT 'Jacob', 'Ahmedabad' UNION ALL

SELECT 'Pinal', 'Bangalore'

Now, let’s create a stored procedure to update the Customers table.

USE TechEd;

GO

CREATE PROCEDURE UpdateCustomer(

@CustID INT,

@SalesAmount MONEY

)

AS

BEGIN TRY

BEGIN TRAN

UPDATE Customers SET LastOrder = GETDATE() WHERE CustID = @CustID

UPDATE customerss SET

SalesAmount = SalesAmount + @SalesAmount

WHERE CustID = @CustID

COMMIT TRAN

END TRY

BEGIN CATCH

IF @@TRANCOUNT > 0

ROLLBACK TRAN

PRINT 'Error Number: ' + CAST (ERROR_NUMBER() AS VARCHAR)

END CATCH

GO

Note that although we have an incorrect table name in the stored procedure, it was successfully created. (Do not go and correct it – this incorrect table name is intentional).

Now, in a SQL Server Management Studio window, run the following query. You will encounter a fatal error mentioned below – fatal enough for SQL Server to stop execution then and there. This is expected and not an accident.

EXEC UpdateCustomer 1, 100

(1 row(s) affected)

Msg 208, Level 16, State 1, Procedure UpdateCustomer, Line 13

Invalid object name ‘customerss’.

Msg 266, Level 16, State 2, Procedure UpdateCustomer, Line 13

Transaction count after EXECUTE indicates a mismatching number of BEGIN and COMMIT statements. Previous count = 0, current count = 1.

Then, run the below query in another SQL Server Management Studio query window.

SELECT * FROM customers

You will notice that the execution continues without producing any results for a long time.

So, what’s the problem?

The problem here is that although the execution of the stored procedure encountered an error, the transaction that we had started was never aborted or rolled-back. As a result, the Customers table remained under lock, causing the SELECT to wait indefinitely. As proof, you may run the following on query window #1:

SELECT @@trancount

ROLLBACK TRAN

You will see that we have one open transaction. Issuing the rollback will cause query window #2 to return results.

What’s the worst that can happen? Well, that depends on your definition of “worst”. According to me, you are already in big trouble – your code just broke. However, if that is not serious enough, your table is now under lock until and unless and external force (i.e. manual ROLLBACK) is applied (almost like Newton’s laws, isn’t it?). This can result in your entire application becoming unusable and ultimately a “site-down” (or P1) support call with the customer (and your manager) screaming on you.

Solution #01: Create a wrapper stored procedure

One of the solutions is to simulate what we just did, i.e. create a wrapper stored procedure, which would be able to:

- detect that the underlying procedure call failed

- check if there are any open transactions

- If yes, rollback all open transactions

We can implement this as under:

USE TechEd;

GO

BEGIN TRY

EXEC UpdateCustomer 1, 100

END TRY BEGIN CATCH

IF @@TRANCOUNT > 0 ROLLBACK TRAN

PRINT 'Transaction Rolled Back'

END CATCH

GO

Try it out. It works, but there is a problem. In an enterprise class system, we may have hundreds of stored procedures – and it is simply not practical to have wrappers on each.

Solution #02: SET XACT_ABORT ON

This solution is actually, quite simple (and what I would recommend). All we need to tell Microsoft SQL Server is to automatically issue a ROLLBACK if the transaction encounters a fatal error.

All that one needs to do is to use a SET option called XACT_ABORT and set it to ON. (BOL link here)

USE TechEd;

GO

ALTER PROCEDURE UpdateCustomer(

@CustID INT,

@SalesAmount MONEY

)

AS

SET XACT_ABORT ON

BEGIN TRY

BEGIN TRAN

UPDATE Customers SET LastOrder = GETDATE() WHERE CustID = @CustID

UPDATE customerss SET

SalesAmount = SalesAmount + @SalesAmount

WHERE CustID = @CustID

COMMIT TRAN

END TRY

BEGIN CATCH

IF @@TRANCOUNT > 0

ROLLBACK TRAN

PRINT 'Error Number: ' + CAST (ERROR_NUMBER() AS VARCHAR)

END CATCH

GO

Now, repeat the exercise of executing the following in two separate query editor windows. Notice that this time, we do not encounter locking and the second query succeeds.

EXEC UpdateCustomer 1, 100

-- run in another session

SELECT * FROM customers

Summarizing – Part 03:

Finally, summarizing all the points that have been discussed today (Part 03) and during Tech-Ed 2011

- Always test your code before it leaves your table! Although things “should” work, they don’t do so just that one time when you don’t test

- If an ordinal position is specified in an expression, it may not produce the expected results

- If an expression is used in ORDER BY, it will be evaluated when FILTERS are evaluated

- Always use SET XACT_ABORT ON in stored procedures to rollback open transactions in case of unhandled errors

Thank-you, Jacob, for this really great session!

You can read my memories of Tech-Ed by going through the following series:

Tech Ed 2011 (India) – Day 01 – Connect, Learn, Explore, Evolve!

Tech Ed 2011 (India) – Day 02 – IE 9 – India launch – Fast is now beautiful!

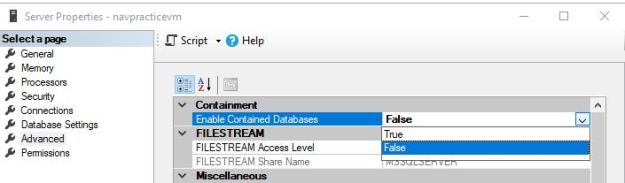

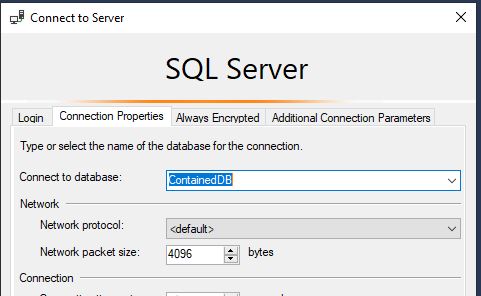

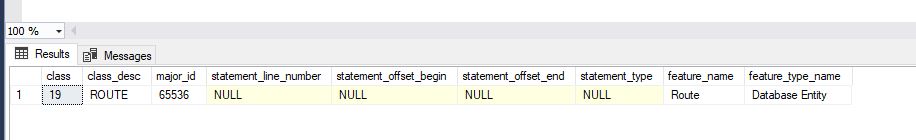

Tech Ed 2011 (India) – Day 03 – For the DBAs – Contained Databases, Extended Events, Virtualization, Wait Stats, Wait Queues & High Availability

Until we meet next time,

Be courteous. Drive responsibly.

Want to ask me a question? Do so at: http://beyondrelational.com/ask/nakul/default.aspx